Research & Engineering Projects

Robotic manipulation, learning-based control, and human-robot interaction

End-to-end learning from human demonstrations using deep-NN and non-linear dynamical systems

Developed a visuomotor policy that combines deep neural networks with dynamic movement primitives for planar manipulation tasks. The model was trained end-to-end from human demonstrations in cluttered scenes: it takes RGB images as input and outputs the parameters of the dynamical system that generates the robot trajectory. Tested on complex real-world planar tasks (stem unveiling and grasping in clutter).

Key Contributions

- Novel visuomotor policy (Resnet-DMP): Integrates deep residual networks (ResNet18) with Dynamic Movement Primitives (DMP) to learn motion patterns from RGB images

- End-to-End Parameter Inference: Automatically extracts model parameters (weights, initial & target positions) directly from raw visual input without external perception

- Optimized Learning: Uses a re-parameterization of DMP to reduce non-linearity, computational effort, and training error

- Validation in complex real-world planar tasks: Stem Unveiling (85% success) and Grasping in Clutter (71.25% success)

- Data-Efficient Learning: Achieved high performance with only 16 human demonstrations by using a hybrid data augmentation strategy (manual and artificial)
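To illustrate how a small set of inferred parameters (weights, initial and target positions) turns into a trajectory, here is a minimal sketch of a standard discrete DMP rollout. It uses the classic formulation, not the re-parameterization mentioned above. The function name `rollout_dmp`, the gains, and the basis-function layout are illustrative assumptions; in the project the parameters come from the network, while here they are set by hand.

```python
import numpy as np

def rollout_dmp(weights, y0, goal, tau=1.0, dt=0.01, n_steps=100,
                alpha_z=25.0, alpha_x=3.0):
    """Euler-integrate a classic discrete DMP (illustrative sketch).

    weights: (n_dims, n_basis) forcing-term weights
    y0, goal: (n_dims,) initial and target positions
    Returns an array of shape (n_steps + 1, n_dims).
    """
    weights = np.atleast_2d(np.asarray(weights, dtype=float))
    y0 = np.asarray(y0, dtype=float)
    goal = np.asarray(goal, dtype=float)
    n_basis = weights.shape[1]
    beta_z = alpha_z / 4.0  # critically damped spring-damper

    # Gaussian basis functions spaced along the decaying phase variable x.
    centers = np.exp(-alpha_x * np.linspace(0.0, 1.0, n_basis))
    widths = n_basis ** 1.5 / centers / alpha_x

    y, z, x = y0.copy(), np.zeros_like(y0), 1.0
    path = [y.copy()]
    for _ in range(n_steps):
        psi = np.exp(-widths * (x - centers) ** 2)
        # Forcing term: normalized weighted basis, gated by the phase x.
        forcing = (weights @ psi) / (psi.sum() + 1e-10) * x * (goal - y0)
        dz = (alpha_z * (beta_z * (goal - y) - z) + forcing) / tau
        dy = z / tau
        dx = -alpha_x * x / tau
        z, y, x = z + dz * dt, y + dy * dt, x + dx * dt
        path.append(y.copy())
    return np.array(path)

# Hand-set parameters standing in for the network output (hypothetical values).
trajectory = rollout_dmp(np.zeros((2, 10)), y0=[0.0, 0.0], goal=[1.0, 2.0])
```

With zero weights the system reduces to a critically damped spring pulling toward the goal; non-zero weights shape the path in between, which is what a learned policy would exploit.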

Technologies

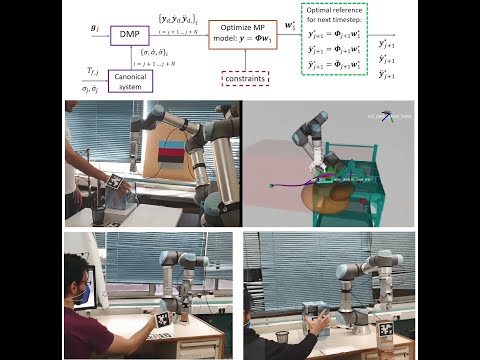

A novel framework for generalizing dynamic movement primitives under kinematic constraints

Developed an MPC-like optimization framework that enforces kinematic constraints in real time on nonlinear dynamical systems trained from human demonstrations. Showed superior performance compared to repelling potential fields and classic MPC approaches. Validated on handover and pick-and-place tasks under position, velocity, acceleration, and obstacle-avoidance constraints.
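As a rough illustration of the underlying idea (staying as close as possible to the learned reference while respecting limits), the sketch below solves a deliberately simplified one-step version of the problem. It is not the framework described above: it handles only box limits on velocity and acceleration, for which the optimum is a simple clip, and the names `constrained_accel` and `track_with_limits` and the limit values are hypothetical.

```python
import numpy as np

def constrained_accel(a_ref, v, dt, v_lim, a_lim):
    """Closest acceleration to a_ref with |a| <= a_lim and |v + a*dt| <= v_lim."""
    lower = np.maximum(-a_lim, (-v_lim - v) / dt)
    upper = np.minimum(a_lim, (v_lim - v) / dt)
    # For per-axis box constraints the least-squares projection is a clip.
    return np.clip(a_ref, lower, upper)

def track_with_limits(a_refs, dt=0.01, v_lim=1.0, a_lim=5.0):
    """Integrate reference accelerations of shape (T, n_dims) under the limits."""
    a_refs = np.asarray(a_refs, dtype=float)
    v = np.zeros(a_refs.shape[1])
    p = np.zeros(a_refs.shape[1])
    positions, velocities, accels = [], [], []
    for a_ref in a_refs:
        a = constrained_accel(a_ref, v, dt, v_lim, a_lim)
        v = v + a * dt
        p = p + v * dt
        positions.append(p.copy())
        velocities.append(v.copy())
        accels.append(a.copy())
    return np.array(positions), np.array(velocities), np.array(accels)

# An aggressive reference that violates both limits if followed directly.
pos, vel, acc = track_with_limits(np.full((200, 2), 20.0))
```

A one-step look-ahead like this cannot guarantee position or obstacle constraints, because braking in time requires reasoning several steps ahead; that is the motivation for optimizing over a horizon.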

Technologies

A human-inspired handover policy using Gaussian mixture models and haptic cues

Developed a novel framework that encodes human demonstrations using Gaussian Mixture Models (GMM), while satisfying the necessary conditions to ensure global asymptotic stability at the target. An object load transfer strategy based on haptic cues is also integrated to ensure stable grasp release. The strategy was validated through both V-REP simulations and real-world experiments involving various objects and human receivers.
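For readers unfamiliar with stable GMM-based motion models, the sketch below shows one well-known formulation: a mixture of linear dynamics whose matrices each satisfy a negative-definiteness condition, which makes the squared distance to the target decrease along every trajectory. This is an assumption for illustration and may differ from the conditions used in this project; all function names and parameter values are hypothetical, and the haptic load-transfer strategy is not covered.

```python
import numpy as np

def mixture_velocity(x, target, priors, means, covs, A):
    """Velocity x_dot = sum_k h_k(x) A_k (x - target) with GMM responsibilities h_k."""
    diff = x - means                                        # (K, d)
    maha = np.einsum('ki,kij,kj->k', diff, np.linalg.inv(covs), diff)
    _, logdet = np.linalg.slogdet(covs)
    log_w = np.log(priors) - 0.5 * (maha + logdet)
    w = np.exp(log_w - log_w.max())                         # avoid underflow
    h = w / w.sum()
    return np.einsum('k,kij,j->i', h, A, x - target)

def satisfies_stability_condition(A):
    """True if every A_k + A_k^T is negative definite."""
    return all(np.all(np.linalg.eigvalsh(Ak + Ak.T) < 0) for Ak in A)

def integrate_to_target(x0, target, priors, means, covs, A, dt=0.01, n_steps=3000):
    """Euler-integrate the dynamics from x0 and return the final state."""
    x = np.asarray(x0, dtype=float).copy()
    for _ in range(n_steps):
        x = x + dt * mixture_velocity(x, target, priors, means, covs, A)
    return x

# Hand-picked two-component model in the plane (hypothetical values).
target = np.array([0.0, 0.0])
priors = np.array([0.5, 0.5])
means = np.array([[1.0, 1.0], [0.0, 0.5]])
covs = np.array([np.eye(2) * 0.5, np.eye(2) * 0.2])
A = np.array([[[-2.0, -1.0], [1.0, -2.0]], [[-1.0, 0.0], [0.0, -3.0]]])
x_final = integrate_to_target([1.0, 1.0], target, priors, means, covs, A)
```

In a learned model the priors, means, covariances, and matrices would be fitted to demonstrations subject to the stability condition rather than chosen by hand.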